Introduction

Autonomous vehicles and advanced robotics depend on machines being able to interpret the physical world. To train those systems, companies collect large amounts of raw data from cameras, LiDAR, and other sensors. That data then has to be labeled so models can learn to identify objects, movement, distance, road conditions, obstacles, and other real-world signals accurately.

This is where data-labeling companies come in. In autonomous vehicles and robotics, their role is not limited to tagging a few images and sending them back. It is also about ingesting, organizing, retaining, and retrieving massive datasets over time.

| Step | What it means in practice |

| Ingesting | Receiving large raw datasets from autonomous vehicle or robotics teams, such as road video, LiDAR files, camera feeds, and other machine-captured data |

| Organizing | Sorting the data so it can actually be worked on, for example by vehicle run, location, sensor type, scene, project, edge case, or labeling status |

| Retaining | Keeping the data after the first labeling pass because it may still be needed later for QA, relabeling, retraining, audits, or rare edge cases |

| Retrieving | Pulling older data back when needed, such as revisiting nighttime pedestrian clips or checking the original raw sequence behind a completed label set |

Seen this way, autonomous vehicle and robotics labeling is not just an annotation task. It is also a data-handling and storage problem. In this blog post, we will spotlight three realities shaping this segment:

1. Autonomous vehicle and robotics labeling involves much larger and heavier datasets than many people assume

2. Labeling video, LiDAR, and multimodal data is more complex than standard annotation workflows

3. At scale, archived data becomes a trust problem, not just a storage problem

And we will close with where Filecoin fits in this stack, and why it becomes relevant for retained data that still needs to remain durable, economically retrievable, and trustworthy over time.

1. The datasets are much larger and heavier than they first appear

The first thing to understand about autonomous vehicle and robotics labeling is that the workflow begins with a large volume of raw machine-generated data. Before any labeling work begins, teams already have to handle huge quantities of video and sensor data that need to be uploaded, organized, and prepared for review. At that point, the challenge is no longer just annotation. It is also the operational work of moving and managing heavy datasets from the start.

Autonomous vehicles (AVs) are evolving into mobile computing platforms, equipped with powerful processors and diverse sensors that generate massive heterogeneous data, for example 14 TB per day.” – AVS paper by arXiv, November 2025

This is not just a theoretical concern. Rivian, an electric vehicle maker developing autonomy features, has already described the problem in operational terms. In a 2025 AWS case study, AWS said Rivian’s data-collection test fleet generates terabytes of sensor and camera data every day, creating a real challenge for upload, storage, and processing.

BMW offers another example of what happens when storage cost becomes part of the workflow. In 2025, AWS said it worked with BMW on a petabyte-scale automated driving data lake and built a way to identify recordings for faster archiving based on access patterns. The point was not just to store more data, but to move less-active recordings into cheaper archival storage sooner, potentially within days of arrival rather than waiting for the standard 30-day transition period.

That matters because even before labeling, QA, or reprocessing begin, the scale of the raw data is already substantial. For teams working in autonomous vehicle and robotics workflows, the scale of the raw data alone starts to push the work beyond lightweight annotation and into a storage-heavy data operation.

2. Video, LiDAR, and multimodal labeling are harder than standard annotation

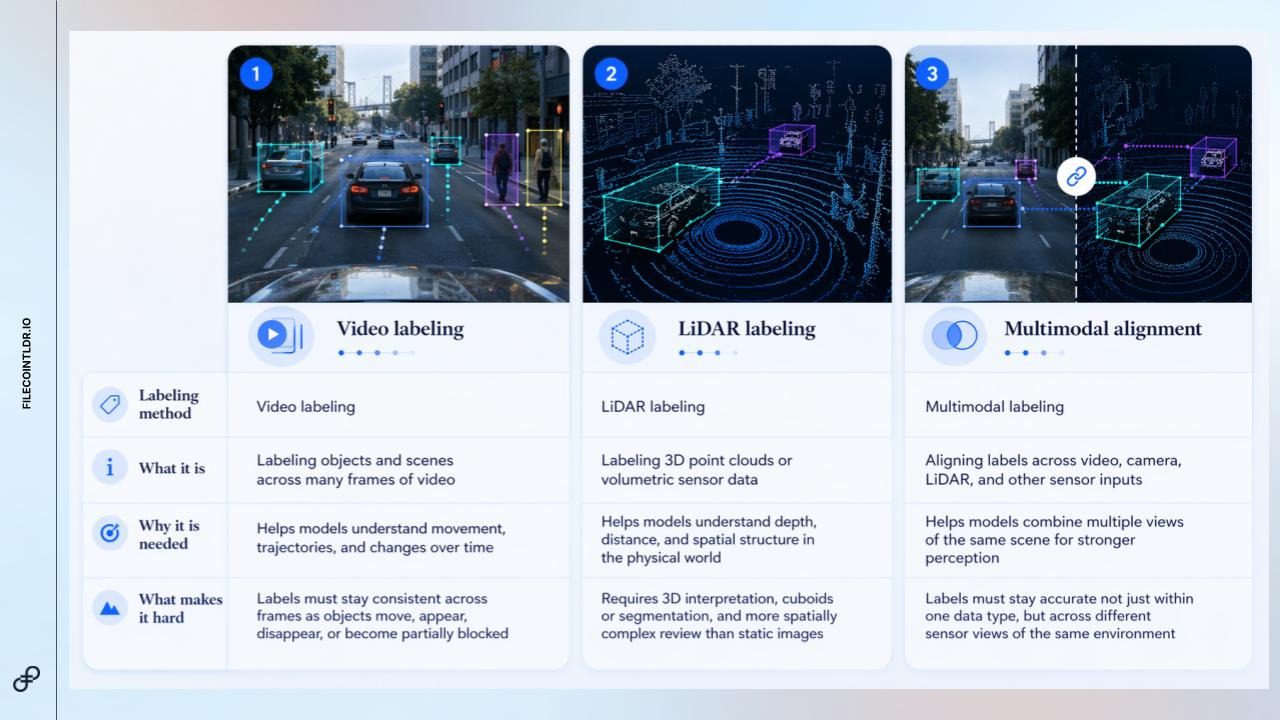

The second reality is that “labeling” in autonomous vehicle and robotics workflows is not one single task. Different systems require different types of labels depending on what the model needs to learn. Some tasks involve identifying objects across long video sequences, others involve labeling three-dimensional LiDAR data, and others require multiple sensor views of the same scene to be aligned together.

Taken together, these methods make the work more demanding than standard annotation. Video requires consistency across time. LiDAR requires spatial understanding in three dimensions. Multimodal workflows require labels to remain aligned across different sensor views of the same environment. For example, Waymo’s public perception data includes camera and LiDAR data, along with tasks such as 2D and 3D tracking and 3D semantic segmentation.

That is a useful reminder that this segment is not dealing with simple one-pass annotation jobs. It is dealing with richer perception data that often requires more specialized tooling, tighter review, and repeated revisiting of the same underlying datasets.

As a result, the storage layer matters more here than in lighter annotation workflows, because the data often needs to remain available for review, relabeling, and future model iteration.

3. Archived data becomes an unproven liability at scale

Once autonomous vehicle and robotics datasets start to accumulate, the challenge is no longer just where they are stored. It is whether teams can trust that the data will still be usable when they need it again.

Older data does not always stay active, but it rarely becomes irrelevant. Teams may need to restore past video clips, LiDAR scans, sensor logs, or labeled datasets for QA, relabeling, model iteration, edge-case review, audit support, or incident investigation.

This creates a restore confidence gap: the gap between believing archived data is safe and being able to verify that it is still intact, recoverable, and tied back to the correct source material or dataset version. That gap matters because many systems treat archive integrity as an assumption. Data is written, retained, and expected to be available later. But in high-stakes AV and robotics workflows, teams may eventually need to prove:

- Can the original data be restored?

- Does it match what was originally stored?

- Is it tied to the right version, label, or model workflow?

- Has it remained intact over time?

This is where storage becomes more than a cost center. Cost, retrieval fees, and retrieval speed still matter, but the deeper operational problem is confidence. Cheap storage is not enough if archived data becomes difficult to verify, expensive to restore, or unreliable when needed.

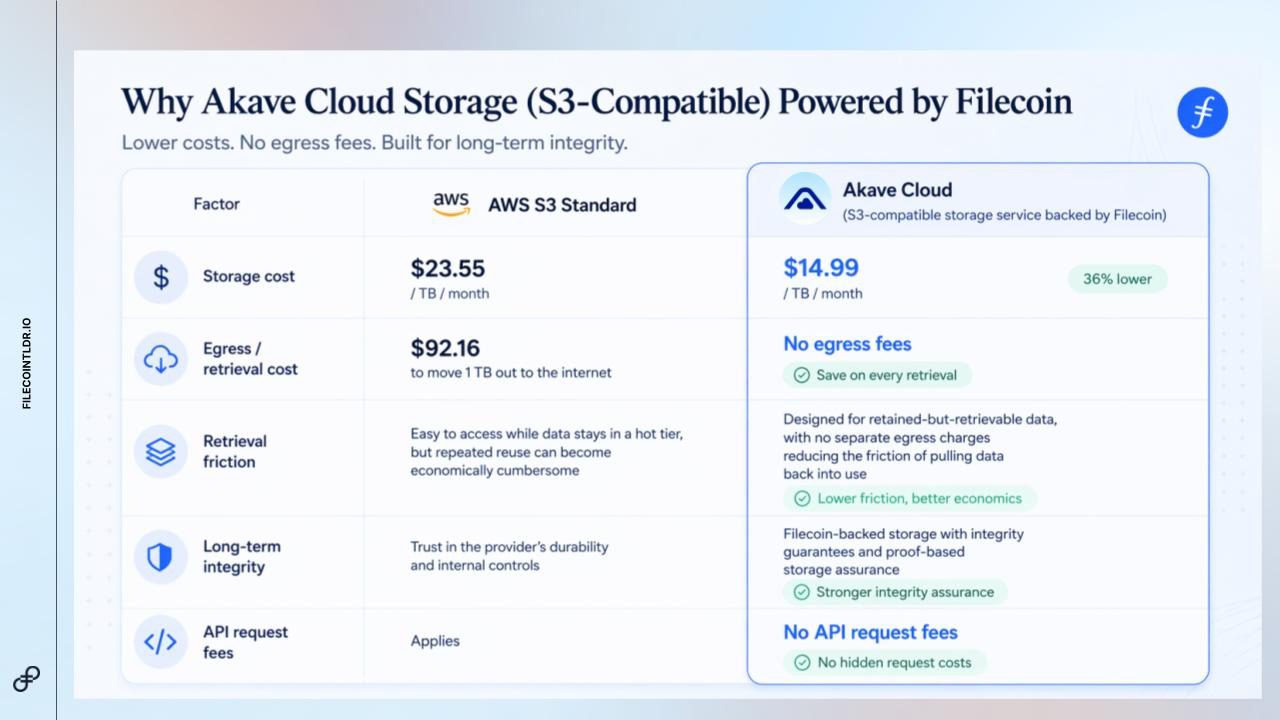

A simple comparison helps make the issue more concrete. Consider AWS S3 Standard alongside Akave Cloud, an S3-compatible storage service backed by Filecoin:

Akave Cloud shows how Filecoin-backed storage can be packaged in a familiar cloud interface. But the stronger point is not just cost. It is verification. Filecoin is designed around a proof-based model where data existence and integrity can be checked over time, rather than simply assumed after upload.

For AV and robotics labeling, that distinction matters. Content addressing and provenance can help tie data back to exact files, versions, or dataset states. A decentralized storage network can also reduce reliance on a single provider or internal system.

The issue, then, is not just that storage gets harder at scale. It is that archived data becomes an unproven liability unless teams can verify that it remains retrievable, intact, and usable. This is where Filecoin’s relevance becomes clearer: it addresses the proof problem, not just the storage problem.

Conclusion

In conclusion, Filecoin’s relevance in autonomous vehicle and robotics labeling becomes clearer when these workflows are understood not just as annotation tasks, but as long-term data infrastructure problems.

Every video clip, LiDAR scan, sensor log, and labeled edge case can remain useful long after the first training run. Teams may need to revisit old labels, reproduce past datasets, investigate model behavior, or retain historical evidence for safety, QA, and audit purposes. In that context, the question is no longer just where the data is stored. It is whether teams can restore it with confidence.

That is where Filecoin’s role becomes more specific. It is not meant to replace every part of the AV or robotics data stack, but to support the retained data layer where durability, retrievability, and verifiability matter most. Through proof-based storage, content addressing, and Filecoin-backed services such as Akave Cloud, teams can begin to treat long-term data retention as something that can be checked and verified over time, not simply assumed.

As AV and robotics datasets continue to grow, the teams that manage their data foundations well will have an advantage. The future will not only depend on who can label data faster, but on who can preserve, restore, and trust the data their models continue to rely on.

Keep exploring Filecoin

- Follow FilecoinTLDR for ecosystem explainers and updates.

- Join the Filecoin Discord to connect with the community.

- Start the Filecoin Quest Hub to learn, complete quests, and get involved.

- Subscribe to the newsletter for future posts and insights.