Overview

There is an overwhelming amount of work going on in the Filecoin ecosystem, and it can be difficult to see how all the pieces fit together. In this blog post, I’m going to explain the structure of Filecoin and the various components of its roadmap, to hopefully make the ecosystem easier to navigate. This post is organized into the following sections:

- What is Filecoin?

- Diving into the Major Components

- Final Thoughts

This post is intended to be a primer on the major goings-on in Filecoin land; it is by no means exhaustive of everything happening! Hopefully, this post serves as a useful anchor and the embedded links are jumping-off points for the intrepid reader.

What is Filecoin?

My short answer: Filecoin is enabling open services for data, built on top of the IPFS protocol.

IPFS allows data to be uncoupled from specific servers, reducing the siloing of data to specific machines. In IPFS land, the goal is to allow permanent references to data, and to do things like compute, storage, and transfer, without relying on specific devices, cloud providers, or storage networks. Why content addressing is super powerful and what CIDs unlock is a separate topic, worthy of its own blog post, that I won’t get into here.

Filecoin is an incentivized network on top of IPFS — in that it allows you to contract out services around data on an open market.

Today, Filecoin focuses primarily on storage as an open service, but the vision includes the infrastructure to store, distribute, and transform data. Looking at Filecoin through this lens, the path the project is pursuing and the bets/tradeoffs that are being taken become clearer.

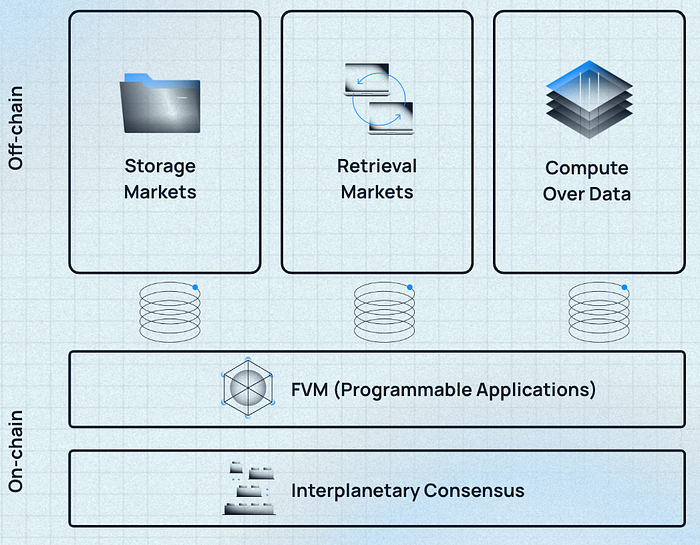

It’s easier to bucket Filecoin into a few major components:

- Storage Market(s): Exists today (cold storage), improvements in progress.

- Retrieval Market(s): In progress

- Compute over Data (Off-chain Compute): In progress

- FVM (Programmable Applications): In progress

- Interplanetary Consensus (Scaling): In progress

Diving into the Major Components

Storage Market(s)

Storage is the bread and butter of the Filecoin economy. Filecoin’s storage network is an open market of storage providers — all offering capacity on which storage clients can bid. To date, there are 4000+ storage providers around the world offering 17EiB (and growing) of storage capacity.

Filecoin is unique in that it uses two types of proofs (both related to storage space and data) for its consensus: Proof-of-Replication (PoRep) and Proof-of-Spacetime (PoSt).

- PoRep allows a miner to prove both that they’ve allocated some amount of storage space AND that there is a unique encoding of some data (could be empty space, could be a user’s data) into that storage space. This proves that a specific replica of data is being stored on the network.

- PoSt allows a miner to prove to the network that the data in a given set of storage space is indeed still intact (the entire network is checked every 24 hrs). This proves that said data is being stored (space) over time.
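To make the "prove the data is still intact" idea concrete, here is a toy challenge-response sketch. It is not how PoRep or PoSt actually work (the real proofs involve sealing and SNARKs); it only illustrates the general pattern of committing to data once and later answering a challenge against that commitment. The Merkle tree, the chunk layout, and every function name below are my own assumptions for illustration.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_tree(chunks: list) -> list:
    """Return every level of a Merkle tree, leaves first.

    Assumes len(chunks) is a power of two to keep the sketch short.
    """
    level = [h(c) for c in chunks]
    levels = [level]
    while len(level) > 1:
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels: list, index: int) -> list:
    """Collect the sibling hashes on the path from leaf `index` up to the root."""
    path = []
    for level in levels[:-1]:
        path.append(level[index ^ 1])  # sibling sits next to us in the pair
        index //= 2
    return path


def verify(root: bytes, chunk: bytes, index: int, path: list) -> bool:
    """Recompute the root from one chunk plus its sibling path."""
    node = h(chunk)
    for sibling in path:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


# The provider commits to its data once; only the root needs to be public.
chunks = [f"chunk-{i}".encode() for i in range(8)]
levels = build_tree(chunks)
root = levels[-1][0]

# Later, a verifier challenges one chunk (fixed here; random in practice).
challenge = 5
proof = prove(levels, challenge)

assert verify(root, chunks[challenge], challenge, proof)
assert not verify(root, b"lost or corrupted", challenge, proof)
```

The point to notice is that the verifier only needs the 32-byte root and a short path, not the data itself, which is the same flavor of asymmetry that lets a small chain vouch for a very large amount of storage.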

These proofs are tied to economic incentives to reward miners who reliably store data (block rewards) and severely penalize those who lose data (slashing). One can think of these incentives like a cryptographically enforced service-level agreement, except rather than relying on the reputation of a service provider — we use cryptography and protocols to ensure proper operation.

In summary, the Filecoin blockchain is a verifiable ledger of attestations about what is happening to data and storage space on the network.

A few features of the architecture that make this unique:

- The Filecoin storage network has 17 EiB of total storage capacity, yet the Filecoin blockchain is still verifiable on commodity hardware at home. This gives the Filecoin blockchain properties similar to those of Ethereum or Bitcoin, but with the ability to manage internet-scale capacity for the services anchoring into the blockchain.

- This ability is uniquely enabled by the fact that Filecoin uses SNARKs for its proofs — rather than storing data on-chain. In the same way zk-rollups can use proofs to assert the validity of some batched transactions, Filecoin’s proofs can be used to verify the integrity of data off-chain.

- Filecoin is able to repurpose the “work” that storage providers would normally do to secure our chain via consensus to also store data. As a result, storage users on the network are subsidized by block rewards and other fees (e.g. transaction fees for sending messages) on the network. The net result is Filecoin’s storage price is super cheap (best represented in scientific notation per TiB/year).

- Filecoin gets regular “checks” via our proofs about data integrity on the network (the entire network is checked every 24 hrs!). These verifiable statements are important primitives that can lead to unique applications and programs being built on Filecoin itself.

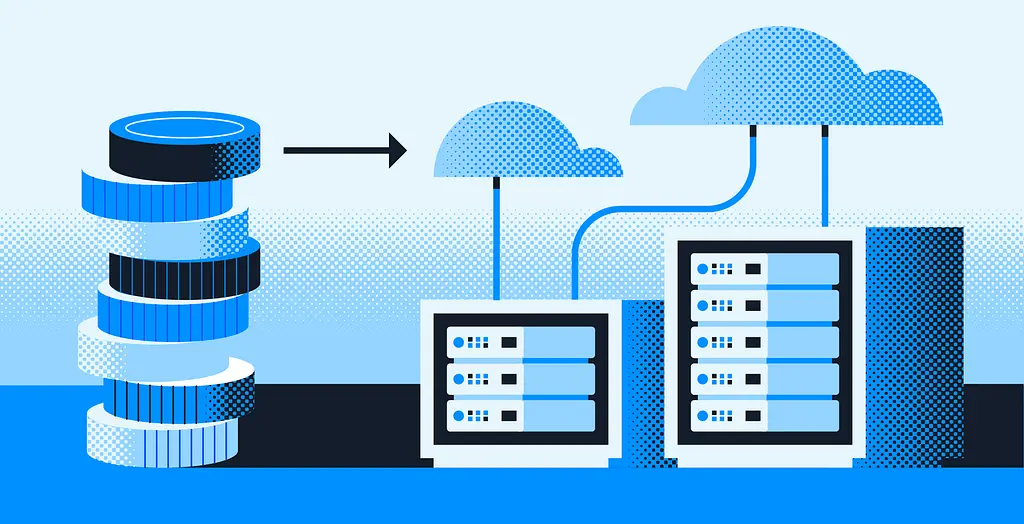

While this architecture has many advantages (scalability! verifiability!), it comes at the cost of additional complexity: the storage providing process is more involved, and writing data into the network can take time. This complexity makes Filecoin (as it is today) best suited for cold storage. Many folks using Filecoin today are likely doing so through a developer on-ramp (Estuary.tech, NFT.Storage, Web3.Storage, ChainSafe’s SDKs, Textile’s Bidbot, etc.) which couples hot caching in IPFS with cold archival in Filecoin. Those using Filecoin alone are typically storing large-scale archives.

However, as improvements land both to the storage providing process and the proofs, expect more hot storage use cases to be enabled. Some major advancements to keep an eye on:

- ✅ SnapDeals — coupled with the below, storage providers can turn the mining process into a pipeline, injecting data into existing capacity on the network to dramatically lessen time to data landing on-chain.

- 🔄 Sealing-as-a-service / SNARKs-as-a-service — allowing storage providers to focus on data storage and outsource expensive computations to a market of specialized providers.

- 🔄 Proofs optimizations — tuning hardware to optimize for the generation of Filecoin proofs.

- 🔄 More efficient cryptographic primitives — reducing the footprint or complexity of proof generation.

Note: All of this is separate from the “read” flow, for which techniques for faster reads exist today via unsealed copies. However, for Filecoin to get to web2 latency, we will need Retrieval Market(s), discussed in the next section.

Retrieval Market(s)

The thesis with retrieval markets is straightforward: at scale, caching data at the edge via an open market can solve for the speed of light problem and result in performant delivery at lower costs than traditional infrastructure.

Why might this be the case? The argument is as follows:

- The magic of content addressing (using fingerprints of content as the canonical reference) means data is verifiable.

- This maps neatly to building a permissionless CDN — meaning anyone can supply infrastructure and serve content — as end users can always verify that the content they receive back is the content they requested (even from an untrusted computer).

- If anyone can supply infrastructure into this permissionless network, a CDN can be created from a market of edge-caching nodes (rather than centrally planning where to put these nodes) and use incentive mechanisms to bootstrap hardware — leading to the optimal tradeoff on performance and cost.
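The verifiability argument above can be sketched in a few lines. This is a minimal illustration that uses a plain SHA-256 hex digest as a stand-in for a CID (real CIDs carry extra encoding and codec information), and the node dictionaries and function names are hypothetical.

```python
import hashlib


def content_id(data: bytes) -> str:
    """Hex SHA-256 digest, used here as a simplified stand-in for a CID."""
    return hashlib.sha256(data).hexdigest()


def fetch_verified(cid: str, untrusted_node: dict) -> bytes:
    """Fetch bytes from an untrusted node and reject anything that does not hash to `cid`."""
    data = untrusted_node.get(cid)
    if data is None:
        raise KeyError(f"node has no content for {cid}")
    if content_id(data) != cid:
        raise ValueError("content does not match the requested identifier")
    return data


original = b"hello filecoin"
cid = content_id(original)

honest_node = {cid: original}
malicious_node = {cid: b"tampered bytes"}

# Any node can serve the request; the client checks the bytes itself.
assert fetch_verified(cid, honest_node) == original

try:
    fetch_verified(cid, malicious_node)
except ValueError as err:
    print("rejected:", err)
```

Because the check happens on the client, it does not matter who operates the cache, which is what makes a permissionless supply side plausible.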

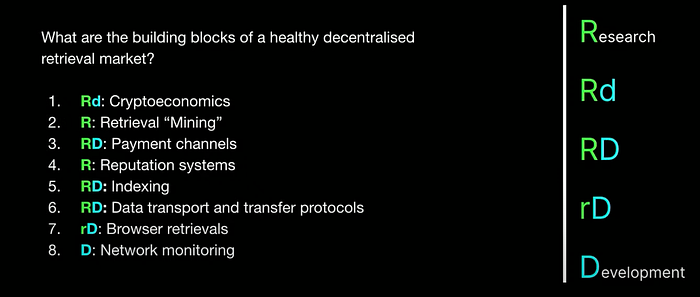

The way retrieval markets are being designed on Filecoin, the aim is not to mandate a specific network to be used — rather to let an ecosystem evolve (e.g. Magmo, Ken Labs, Myel, Filecoin Saturn, and more) to solve the components involved with building a retrieval market.

This video is a good primer on the structure and approach of the working group and one can follow progress here.

Note: Given latency requirements, retrievals happen off-chain, but the settlement for payment for the services can happen on-chain.
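One common way to get "fast off-chain, settled on-chain" is a payment-channel pattern: the client hands the provider a stream of signed vouchers while data flows, and only the last voucher ever touches the chain. The sketch below is my own simplification and not the protocol any of the teams above have specified. In particular, the HMAC with a shared key is just a stand-in for a real public-key signature, and `SettlementContract` is a hypothetical name.

```python
import hashlib
import hmac


def sign_voucher(key: bytes, channel_id: str, cumulative: int) -> bytes:
    """Authorize paying `cumulative` tokens in total on this channel.

    HMAC stands in for a real signature scheme in this sketch.
    """
    message = f"{channel_id}:{cumulative}".encode()
    return hmac.new(key, message, hashlib.sha256).digest()


class SettlementContract:
    """Toy 'on-chain' side: holds the client's deposit and settles one voucher."""

    def __init__(self, channel_id: str, key: bytes, deposit: int):
        self.channel_id = channel_id
        self.key = key
        self.deposit = deposit
        self.settled = False

    def settle(self, cumulative: int, signature: bytes) -> int:
        if self.settled:
            raise RuntimeError("channel already settled")
        expected = sign_voucher(self.key, self.channel_id, cumulative)
        if not hmac.compare_digest(expected, signature):
            raise ValueError("invalid voucher")
        if cumulative > self.deposit:
            raise ValueError("voucher exceeds deposit")
        self.settled = True
        return cumulative


key = b"client-secret"
contract = SettlementContract("chan-1", key, deposit=100)

# Off-chain: one voucher per delivered chunk, each superseding the last.
price_per_chunk = 3
latest = None
for chunk_number in range(1, 11):
    total = chunk_number * price_per_chunk
    latest = (total, sign_voucher(key, "chan-1", total))

# On-chain: a single transaction redeems the final voucher.
paid = contract.settle(*latest)
assert paid == 30
```

Ten chunks are paid for with zero on-chain messages during delivery and one at the end, so chain latency never sits on the retrieval path.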

Compute over Data (Off-chain Compute)

Compute over data is the third piece of the open services puzzle. When one thinks of what needs to be done with data, it’s typically not just storage and retrieval; users also want to be able to transform the data. The goal of these compute-over-data protocols is generally to perform computation over IPLD.

For the unfamiliar, IPLD aims to be the data layer for content-addressed systems. It can be used to describe a filesystem (like UnixFS, which IPFS uses), Ethereum data, Git data: really anything that is hash linked. This video might be a helpful primer.

The neat thing about IPLD being generic is that it can be an interface for all sorts of data. By building computation tools that interact with IPLD, teams get compatibility with a wide range of underlying data types without having to handle each format themselves.
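For a feel of what "hash linked" means in practice, here is a small sketch of a generic traversal over linked blocks. It borrows the `{"/": ...}` link shape from IPLD's DAG-JSON convention, but everything else is simplified: the links are bare SHA-256 hex digests rather than real CIDs, JSON with sorted keys stands in for a proper codec, and `put`, `get`, and `resolve` are hypothetical helpers.

```python
import hashlib
import json


def put(store: dict, node: dict) -> str:
    """Serialize a node deterministically, store it under its hash, return the hash."""
    encoded = json.dumps(node, sort_keys=True).encode()
    key = hashlib.sha256(encoded).hexdigest()
    store[key] = encoded
    return key


def get(store: dict, key: str) -> dict:
    """Load a block and check that it really hashes to the link that named it."""
    encoded = store[key]
    if hashlib.sha256(encoded).hexdigest() != key:
        raise ValueError("block does not match its link")
    return json.loads(encoded)


def resolve(store: dict, root: str, path: list):
    """Walk field names from `root`, transparently following {"/": hash} links."""
    node = get(store, root)
    for segment in path:
        value = node[segment]
        if isinstance(value, dict) and set(value) == {"/"}:
            value = get(store, value["/"])
        node = value
    return node


store = {}
readme = put(store, {"name": "README.md", "size": 12})
directory = put(store, {"name": "docs", "entries": {"readme": {"/": readme}}})
commit = put(store, {"message": "initial", "tree": {"/": directory}})

# The same traversal works whether the blocks model files, commits, or chain state.
assert resolve(store, commit, ["tree", "entries", "readme", "name"]) == "README.md"
```

`resolve` knows nothing about filesystems or commits; it only knows how to follow links, which is why a compute tool written against this kind of interface can be reused across very different data.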

Note: This should be exciting for any network building on top of IPFS / IPLD (e.g. Celestia, Gala Games, Audius, Ceramic, etc)

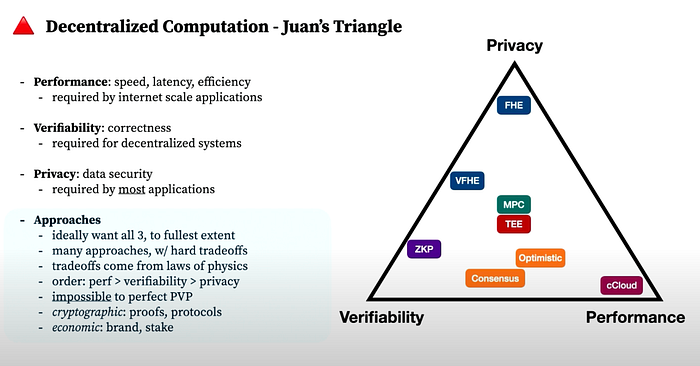

Of course, not all compute is created equal — and for different use cases, different types of compute will be needed. For some use cases, there might be stricter requirements for verifiability — and one may want a zk proof along with the result to know the output was correctly calculated. For others, one might want to keep the data entirely private — and so instead might require fully homomorphic encryption. For others, one may want to just run batch processing like on a traditional cloud (and rely on economic collateral or reputational guarantees for correctness).

There are a bunch of teams working on different types of compute — from large scale parallel compute (e.g. Bacalhau), to cryptographically verifiable compute (e.g. Lurk), to everything in between.

One interesting feature of Filecoin is that the storage providers have compute resources (GPUs, CPUs — as a function of needing to run the proofs) colocated with their data. Critically, this feature sets up the network well to allow compute jobs to be moved to data — rather than moving the data to external compute nodes. Given that data has gravity, this is a necessary step to set the network up to support use cases for compute over large datasets.

One can follow the compute over data working group here.

FVM (Programmable Applications)

Up until this point, I’ve talked about three services (storage, retrieval, and compute) that are related to the data stored on the Filecoin network. These services and their composability can lead to compounding demand for the services of the network — all of which ultimately anchor into the Filecoin blockchain and generate demand for block space.

But how can these services be enhanced?

Enter the FVM — Filecoin’s Virtual Machine.

The FVM will enable computation over Filecoin’s state. This service is critical — as it gives the network all the powers of smart contracts from other networks — but with the unique ability to interact with and trigger the open services mentioned above.

With the FVM, one can build bespoke incentive systems to make more sophisticated offerings on the network:

- DataDAOs

- Retrievability Oracles

- Perpetual storage contracts / storage endowments

- Repair bounties

- Undercollateralized lending markets for storage providers

- ETL pipelines

- … and so much more

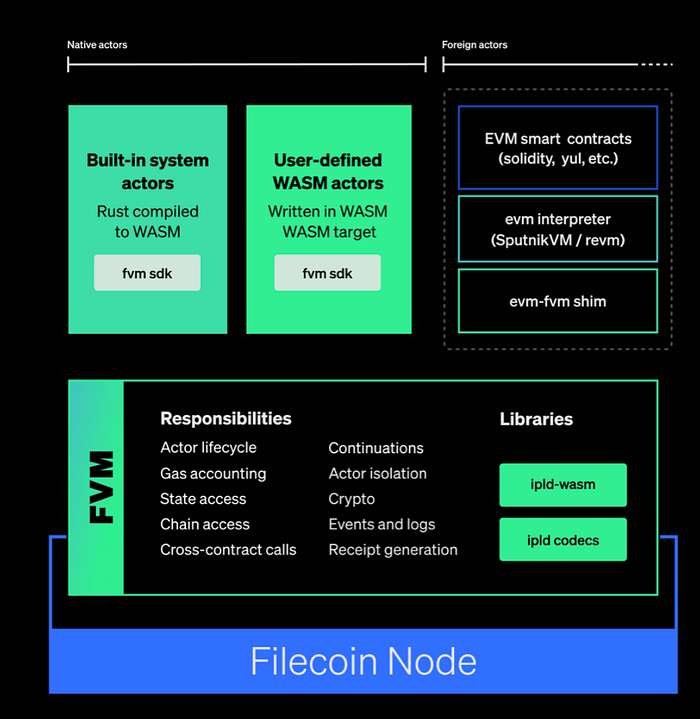

Filecoin’s virtual machine is a WebAssembly (WASM) VM designed like a hypervisor. The vision with the FVM is to support many foreign runtimes, starting with the Ethereum Virtual Machine (EVM). This interoperability means Filecoin will support multiple VMs: contracts designed for the EVM, MoveVM, and more can be deployed on the same network.

By allowing for many VMs, Filecoin developers can deploy hardened contracts from other ecosystems to build up the on-chain infrastructure in the Filecoin economy, while also making it easier for other ecosystems to natively bridge into the services on the Filecoin network. Multiple VM support also allows for more native interactions between the Filecoin economy and other L1 economies.

The FVM is critical as it provides the expressiveness for people to deploy and trigger custom data services from the Filecoin network (storage, retrieval, and compute). This feature allows for more sophisticated offerings to be built on Filecoin’s base primitives, and expands the surface area for broader adoption.

Note: For a flavor of what might be possible, this tweet thread might help elucidate how one might use smart contracts and the base primitives of Filecoin to build more sophisticated offerings.

Most importantly, the FVM also sets the stage for the last major pillar to be covered in this post: interplanetary consensus.

One can follow progress on the FVM here, and find more details on the FVM here.

Interplanetary Consensus (Scaling)

Before diving into what interplanetary consensus is, it’s worth restating what Filecoin is aiming to build: open services for data (storage, retrieval, compute) as credible alternatives to the centralized cloud.

To do this, the Filecoin network needs to operate at a scale orders of magnitude above what blockchains are currently delivering:

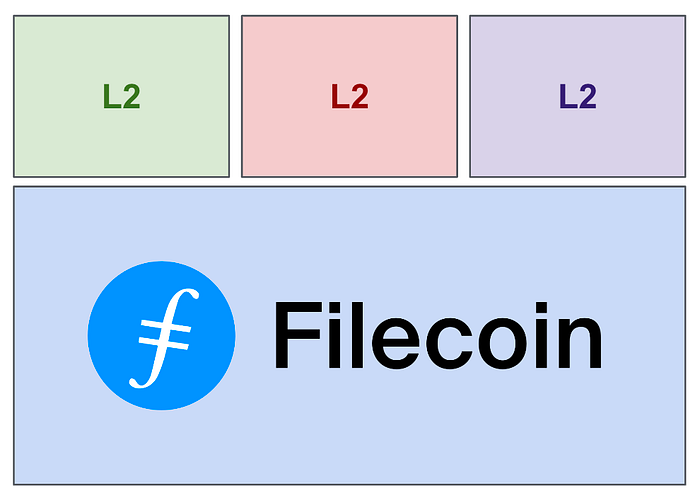

Looking at the above requirements, it may seem contradictory for one chain to target all of these properties. And it is! Rather than trying to force all these properties at the base layer, Filecoin is aiming to deliver these properties across the network.

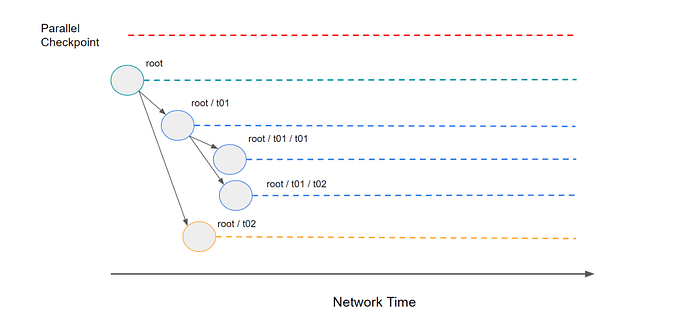

With interplanetary consensus, the network allows for recursive subnets to be created on the fly. This framework allows each subnet to tune its own trade off between security and scalability (and recursively spin up subnets of its own) — while still checkpointing information to their respective parent subnets.

This setup means that while Filecoin’s base layer can be highly secure (allowing many folks to verify at home on commodity hardware) — Filecoin can have subnets that are natively connected that can make different trade offs, allowing for more use cases to be unlocked.

A few interesting properties based on how interplanetary consensus is being designed:

- Each subnet can spin up its own subnets (enabling recursive subnets)

- Native messaging up, down, and across the tree — meaning any of these subnets can communicate with each other

- Tunable tradeoffs between security and scalability (each subnet can choose its own consensus model and can choose to maintain its own state tree).

- Firewall-esque security guarantees from children to parents (think of each subnet as being like a limited liability chain up to the tokens injected from the perspective of the parent chain).
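A toy model may help make three of these properties tangible: recursive spawning, checkpointing to the parent, and the firewall bound. This is purely my own sketch of the shape of the idea, not the actual interplanetary consensus design. The `Subnet` class, its method names, and the token accounting are all assumptions.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Subnet:
    name: str
    parent: Optional["Subnet"] = None
    injected: dict = field(default_factory=dict)     # child name -> tokens locked for that child
    checkpoints: dict = field(default_factory=dict)  # child name -> checkpoint digests
    state: list = field(default_factory=list)        # toy local state: a list of messages

    def spawn(self, name: str) -> "Subnet":
        """Create a child subnet; children can spawn children of their own."""
        child = Subnet(name=f"{self.name}/{name}", parent=self)
        self.injected[child.name] = 0
        self.checkpoints[child.name] = []
        return child

    def fund(self, child: "Subnet", amount: int) -> None:
        """Lock tokens in the parent on behalf of a child."""
        self.injected[child.name] += amount

    def checkpoint(self) -> str:
        """Commit a digest of local state to the parent."""
        digest = hashlib.sha256("\n".join(self.state).encode()).hexdigest()
        self.parent.checkpoints[self.name].append(digest)
        return digest

    def release(self, child: "Subnet", amount: int) -> None:
        """Firewall: a child can never pull out more than was injected into it."""
        if amount > self.injected[child.name]:
            raise ValueError("firewall: withdrawal exceeds tokens injected")
        self.injected[child.name] -= amount


root = Subnet("/root")
fast = root.spawn("fast")
chat = fast.spawn("chat")  # a subnet of a subnet

root.fund(fast, 100)
fast.state.append("msg-1")
digest = fast.checkpoint()

assert chat.name == "/root/fast/chat"
assert root.checkpoints["/root/fast"] == [digest]

root.release(fast, 60)
try:
    root.release(fast, 50)  # only 40 remain, so the parent refuses
except ValueError as err:
    print(err)
```

However badly a child subnet misbehaves, the parent's exposure in this model is capped at the tokens it locked for that child, which is the "limited liability" intuition from the last bullet.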

To double click on some of the things interplanetary consensus sets Filecoin up for:

- Because subnets can have different consensus mechanisms, interplanetary consensus opens the door for subnets that allow for native communication with other ecosystems (e.g. a Tendermint subnet for Cosmos).

- Enabling subnets to tune between scalability and security (and enabling communications to subnets that make different trade offs) means Filecoin can have different regions of the network with different properties. Performant subnets can get hyper fast local consensus (to enable things like chat apps) — while allowing for results to checkpoint into the highly secure (and verifiable and slow) Filecoin base layer.

- In a very high throughput subnet (a single data center, running a few nodes), the FVM/IPVM work could be used to simply schedule tasks and execute computation directly “on-chain”, with native messaging and payment bubbling back up to more secure base layers.

Learn more by reading this blog post and following the progress of ConsensusLab. This GitHub discussion may also be useful to contextualize IPC vs L2s.

Final Thoughts

So, after reading all the above, hopefully it’s clearer what Filecoin is, and how it’s not exactly like any other protocol out there. Filecoin’s ambition is not just to be a storage network (just as Tesla’s ambition was not to just ship the Roadster); the goal is to facilitate a fully decentralized web powered by open services.

Compared to most other web3 infra plays, Filecoin is aiming to be substantially more than a single service. Compared to most L1s, Filecoin is targeting a set of use cases that are uniquely enabled through the architecture of the network. Excitingly, this means rather than competing for the same use cases, Filecoin can uniquely expand the pie for what can actually be done on crypto rails.

Disclaimer: These are personal views; they do not reflect those of my employer, nor should they be treated as “official”. This is my distillation of what Filecoin is and what makes it different based on my time in the ecosystem. Thanks to @duckie_han and @yoitsyoung for helping shape this.